Kubernetes-based Collector

The JupiterOne Integration Operator is a Kubernetes-native solution for running JupiterOne integrations within your Kubernetes cluster. It manages Custom Resource Definitions (CRDs) for integration management and provides a scalable approach for organizations already using Kubernetes.

Prerequisites

Cluster Requirements

- Kubernetes 1.16+

- Helm 3+

JupiterOne Requirements

- Account ID — found at

/settings/account-management - API Token — create one at

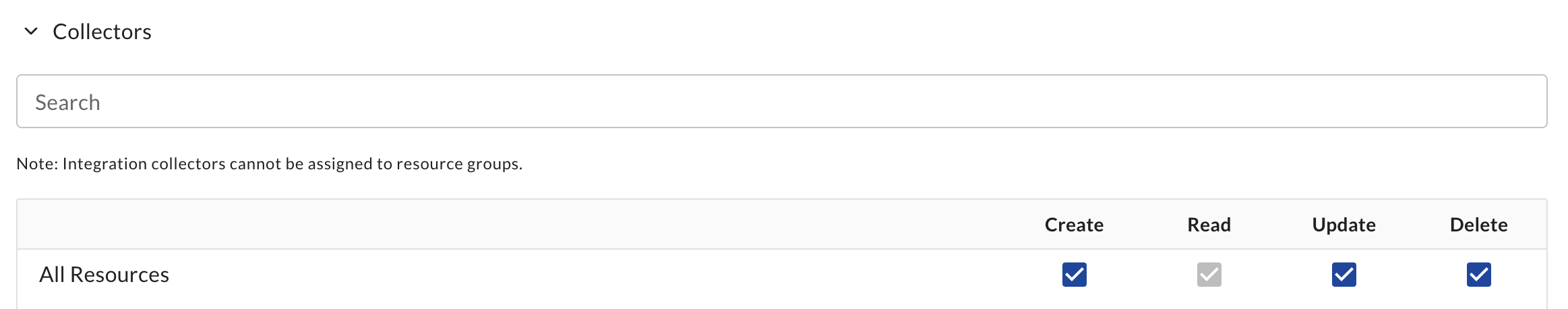

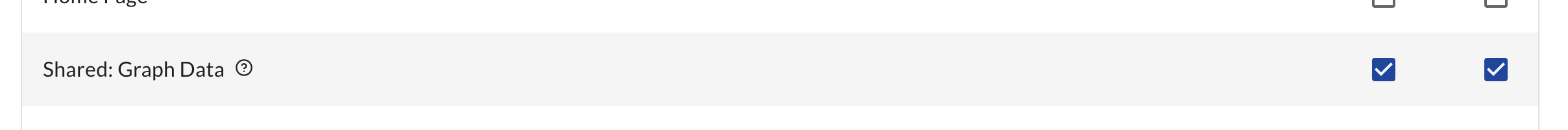

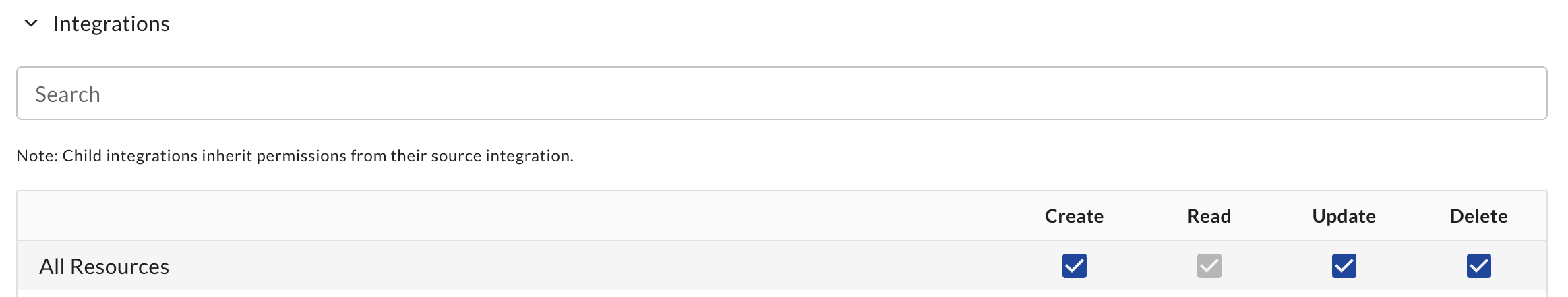

/settings/account-api-tokensor/settings/api-tokenswith the following permissions. If using a personal token (/settings/api-tokens), your user account must have these permissions assigned.

| Permission | Required | When |

|---|---|---|

| Collector Create/Read/Update/Delete | Yes | Always |

| Shared: Graph Data Read/Write | Yes | Always |

| Integration Create/Read/Update/Delete | No | Only if creating Integrations via Helm |

Permission screenshots

Installation

Installation is handled by two Helm charts:

- Kubernetes Operator: This chart installs the controllers that manage the CRDs. It can be upgraded independently of the Integration Runner.

- Integration Runner: This chart installs an integrationrunner Custom Resource that tells the operator to create a new collector and register it with JupiterOne.

Kubernetes Operator

First, install the operator, which manages all the CRDs.

- Add the JupiterOne Helm Repository:

helm repo add jupiterone https://jupiterone.github.io/helm-charts

helm repo update

- Create a Namespace:

kubectl create namespace jupiterone

- Install the Integration Operator:

helm install integration-operator jupiterone/jupiterone-integration-operator --namespace jupiterone
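The operator installation steps can also be combined into one re-runnable function. This is a minimal sketch, not the documented procedure: it assumes Helm 3.2 or later (for --create-namespace), swaps helm install for helm upgrade --install so a second run does not fail, and uses the illustrative function name install_operator.

```shell
# Install (or upgrade) the operator in one re-runnable step.
# install_operator is an illustrative name, not part of the chart.
# "upgrade --install" installs on the first run and upgrades afterwards;
# --create-namespace replaces the separate kubectl step (Helm 3.2+).
install_operator() {
  helm repo add jupiterone https://jupiterone.github.io/helm-charts &&
  helm repo update &&
  helm upgrade --install integration-operator \
    jupiterone/jupiterone-integration-operator \
    --namespace jupiterone --create-namespace
}
```

Call install_operator from a shell that has helm on its PATH and a kubeconfig pointing at the target cluster.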

Integration Runner

To install the Integration Runner, you need to provide your API token. The runner will create a Collector with the same name. You can choose from two options for managing the API token secret:

- Chart-Assisted (Recommended)

- External Secret (Advanced)

Chart-Assisted (Recommended)

This is the simplest method. The Helm chart automatically creates a Kubernetes Secret containing your API token in the same namespace.

Parameters:

- runnerName: The name of the Runner.

- apiToken: Your API token. This will be created as a Secret in Kubernetes.

- accountID: Your JupiterOne Account ID.

- jupiterOneEnvironment (optional): The JupiterOne environment. Defaults to us. You can find it by inspecting the URL when accessing the UI, for example <environment>.jupiterone.io.

Installation command:

helm install <runnerName> jupiterone/jupiterone-integration-runner --namespace jupiterone --set apiToken=<apiToken> --set accountID=<accountID>
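Passing the token with --set leaves it in shell history and process listings. One alternative is to pass the same values through a temporary values file. The sketch below assumes the chart accepts the parameters listed above as top-level values keys; the function names and the J1_ACCOUNT_ID, J1_API_TOKEN, and J1_ENVIRONMENT environment variables are hypothetical.

```shell
# Print a values document for the runner chart.
# $1 = account ID, $2 = API token, $3 = environment (optional, defaults to "us")
runner_values() {
  printf 'accountID: "%s"\n' "$1"
  printf 'apiToken: "%s"\n' "$2"
  printf 'jupiterOneEnvironment: "%s"\n' "${3:-us}"
}

# Install the runner from a temporary values file instead of --set flags.
# $1 = runner name (used as the Helm release name, as in the command above)
install_runner() {
  # mktemp creates the file readable only by the current user.
  values_file=$(mktemp) || return 1
  runner_values "$J1_ACCOUNT_ID" "$J1_API_TOKEN" "${J1_ENVIRONMENT:-}" > "$values_file"
  helm install "$1" jupiterone/jupiterone-integration-runner \
    --namespace jupiterone --values "$values_file"
  status=$?
  rm -f "$values_file"   # remove the token from disk whether or not helm succeeded
  return "$status"
}
```

With the three variables exported, install_runner runner performs the same installation as the command above. Values containing double quotes or backslashes would need escaping before being written this way.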

External Secret (Advanced)

This method references an existing Kubernetes Secret instead of creating one. Use this option if you manage secrets with external tooling such as Sealed Secrets or External Secrets Operator.

The Secret must store the API token under the key token.

Parameters:

- runnerName: The name of the Runner.

- secretAPITokenName: The name of the existing Kubernetes Secret.

- accountID: Your JupiterOne Account ID.

- createSecret: Must be set to false so the Helm chart does not attempt to create the Secret.

- jupiterOneEnvironment (optional): The JupiterOne environment. Defaults to us. You can find it by inspecting the URL when accessing the UI, for example <environment>.jupiterone.io.

Installation command:

helm install <runnerName> jupiterone/jupiterone-integration-runner --namespace jupiterone --set createSecret=false --set secretAPITokenName=<tokenName> --set accountID=<accountID>
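If no secret management tool is in place yet, the Secret can be created by hand for testing. A minimal sketch, assuming the Secret belongs in the jupiterone namespace alongside the runner; the function name is illustrative, and the only requirement taken from this page is that the key is named token.

```shell
# Create the Secret that secretAPITokenName will point to.
# $1 = Secret name, $2 = path to a file holding only the API token.
# --from-file=token=<path> stores the file contents under the key "token".
create_token_secret() {
  kubectl create secret generic "$1" \
    --namespace jupiterone \
    --from-file=token="$2"
}
```

For example, create_token_secret j1token ./j1-token. The file contents are stored verbatim, so write the token without a trailing newline (for instance with printf '%s').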

Verification

After installation, the runner should register with JupiterOne within 30 seconds. Check its status with:

kubectl get integrationrunner -n jupiterone

Expected output:

NAME STATE DETAIL REGISTRATION AGE

runner running registered 2m38s
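In automation, the registration check can be polled rather than run once. The sketch below is an assumption-laden convenience, not part of the operator: it matches the word registered in the table output shown above because the underlying status field paths are not documented here, and the function name and 5-second interval are arbitrary.

```shell
# Poll until a runner reports "registered" or the timeout expires.
# $1 = timeout in seconds (default 60); polls every 5 seconds.
wait_for_registration() {
  timeout=${1:-60}
  elapsed=0
  while [ "$elapsed" -lt "$timeout" ]; do
    # -w matches "registered" as a whole word only.
    if kubectl get integrationrunner -n jupiterone 2>/dev/null | grep -qw registered; then
      echo "runner registered after ${elapsed}s"
      return 0
    fi
    sleep 5
    elapsed=$((elapsed + 5))
  done
  echo "runner not registered within ${timeout}s" >&2
  return 1
}
```

A CI step could call wait_for_registration 120 and fail the pipeline on a non-zero exit status.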

Private Registry Configuration

If your organization uses a private registry proxy, runs an air-gapped environment, or requires all container images to pass through security-scanned registries that mirror images from ghcr.io, you can configure the JupiterOne Integration Operator to pull integration job images from your private registry.

Prerequisites

Before configuring the operator for private registry support, create a Docker registry secret in the jupiterone namespace so that pods can authenticate against your private registry.

# Create a Docker registry secret in the jupiterone namespace
kubectl create secret docker-registry my-registry-secret \
  --namespace jupiterone \
  --docker-server=myregistry.example.com \
  --docker-username=<username> \
  --docker-password=<password>

# Verify the secret was created
kubectl get secret my-registry-secret -n jupiterone

The secret must exist in the jupiterone namespace where the operator runs. If you use external secret management tools (such as Sealed Secrets or External Secrets Operator), ensure the secret is synced to the jupiterone namespace.

Configuration

You can configure private registry support using --set flags:

helm install integration-operator jupiterone/jupiterone-integration-operator \
  --namespace jupiterone \
  --set controllerManager.imageRegistry=myregistry.example.com \
  --set 'controllerManager.imagePullSecrets[0].name=my-registry-secret'

Or via a values file:

controllerManager:
  imageRegistry: "myregistry.example.com"
  imagePullSecrets:
    - name: my-registry-secret

What these values do:

- controllerManager.imageRegistry: Overrides the default ghcr.io registry for integration job images. Images are pulled as <registry>/jupiterone/graph-<integration>:latest.

- controllerManager.imagePullSecrets: Applied to both the operator pod and all spawned integration job pods so they can authenticate against the private registry.

- controllerManager.disableImageSignatureCheck: Disables cosign image signature verification entirely. See Image Signature Verification below for when this is needed.
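When pre-populating a mirror, it helps to compute the exact references involved. The helper below only builds strings from the pattern stated above; the function names and the example integration name kubernetes are illustrative, and it assumes the upstream image on ghcr.io uses the same jupiterone/graph-<integration> path.

```shell
# Build the image reference described above:
#   <registry>/jupiterone/graph-<integration>:latest
# $1 = integration name, $2 = registry (defaults to ghcr.io)
job_image_ref() {
  echo "${2:-ghcr.io}/jupiterone/graph-$1:latest"
}

# Print the source and destination references for mirroring one image.
# $1 = integration name, $2 = private registry host
mirror_plan() {
  echo "$(job_image_ref "$1") -> $(job_image_ref "$1" "$2")"
}
```

For example, mirror_plan kubernetes myregistry.example.com prints the ghcr.io reference followed by its private registry counterpart, which can then be fed to whatever copy tool your registry workflow uses.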

Image Signature Verification

The operator verifies the cosign signature of each integration image before running it. When a private registry is configured, the operator uses the following verification strategy:

- Try the configured registry first: if your private registry mirrors cosign signatures, verification succeeds immediately.

- Fall back to ghcr.io: if signature verification fails against the private registry (for example, because cosign signatures are not replicated), the operator automatically retries verification against the original ghcr.io image reference where signatures are published.

- Fail only if both checks fail: the image is rejected only when neither the private registry nor ghcr.io can provide a valid signature.

This means you do not need to mirror cosign signatures to your private registry, as long as the operator has network access to ghcr.io for signature verification.
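The fallback order can be illustrated with a small simulation. This is not the operator's code: verify_signature is a stand-in that consults a hypothetical SIGNED_REFS list instead of calling cosign, so the sketch shows only the decision flow described above.

```shell
# Simulated signature check: succeeds only for references listed in
# SIGNED_REFS (space-separated). A stand-in for a real cosign verification.
verify_signature() {
  case " ${SIGNED_REFS:-} " in
    *" $1 "*) return 0 ;;
    *) return 1 ;;
  esac
}

# Try the private registry first, then ghcr.io; reject only if both fail.
# $1 = private registry host, $2 = image path (jupiterone/graph-<integration>:latest)
verify_with_fallback() {
  if verify_signature "$1/$2"; then
    echo "verified against $1"
  elif verify_signature "ghcr.io/$2"; then
    echo "verified against ghcr.io"
  else
    echo "rejected: no valid signature found" >&2
    return 1
  fi
}
```

Setting SIGNED_REFS to only the ghcr.io reference models a mirror that does not replicate signatures: the first check fails and the second succeeds.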

Disabling signature verification

If your environment does not allow egress to ghcr.io and you do not mirror cosign signatures to your private registry, you can disable signature verification:

helm install integration-operator jupiterone/jupiterone-integration-operator \
  --namespace jupiterone \
  --set controllerManager.imageRegistry=myregistry.example.com \
  --set 'controllerManager.imagePullSecrets[0].name=my-registry-secret' \
  --set controllerManager.disableImageSignatureCheck=true

Or via a values file:

controllerManager:
  imageRegistry: "myregistry.example.com"
  imagePullSecrets:
    - name: my-registry-secret
  disableImageSignatureCheck: true

Verify Private Registry Configuration

After installation, check that the operator pod is running and pulling images from the correct registry:

kubectl get pods -n jupiterone

kubectl describe pod -n jupiterone -l control-plane=controller-manager | grep Image

Resource Requests and Limits for Integration Jobs

Many enterprise Kubernetes clusters enforce resource policies using Kyverno, OPA/Gatekeeper, or similar admission controllers. These policies often require all containers to declare CPU and memory requests and limits. By default, integration job containers created by the operator do not include resource requirements, which may cause job creation to be blocked by such policies.

Configuration

Configure resource requests and limits for integration job containers using --set flags:

helm install integration-operator jupiterone/jupiterone-integration-operator \
  --namespace jupiterone \
  --set controllerManager.jobResources.requests.cpu=100m \
  --set controllerManager.jobResources.requests.memory=256Mi \
  --set controllerManager.jobResources.limits.cpu=1 \
  --set controllerManager.jobResources.limits.memory=1Gi

Or via a values file:

controllerManager:
  jobResources:
    requests:
      cpu: 100m
      memory: 256Mi
    limits:
      cpu: "1"
      memory: 1Gi

When jobResources is not set, no resource requirements are applied to integration job containers (the default behavior).

Verifying

After configuring job resources, trigger an integration run and inspect the created Job:

kubectl get jobs -n jupiterone -o yaml | grep -A 10 resources

Troubleshooting Job Creation Failures

If integration jobs are not being created, check the IntegrationInstanceJob status:

kubectl get integrationinstancejobs -n jupiterone

The JobCreated column shows whether the Kubernetes Job was created. If it shows FAILED, inspect the full status for the error:

kubectl get integrationinstancejob <name> -n jupiterone -o jsonpath='{.status}'

Common causes:

- Admission webhook rejection: configure jobResources to satisfy cluster resource policies.

- Image pull failures: check the imagePullSecrets configuration.
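The two troubleshooting commands can be chained to print the status of every failed job at once. A minimal sketch: it assumes a failed row contains the literal text FAILED, as described above, and relies only on the resource name being the first column of kubectl get output; the function name is illustrative.

```shell
# Print the full status of every IntegrationInstanceJob whose row shows FAILED.
show_failed_jobs() {
  kubectl get integrationinstancejobs -n jupiterone --no-headers |
    awk '/FAILED/ { print $1 }' |
    while read -r name; do
      echo "== $name"
      # </dev/null keeps kubectl from consuming the loop's input.
      kubectl get integrationinstancejob "$name" -n jupiterone \
        -o jsonpath='{.status}' </dev/null
      echo
    done
}
```

The output is one header line per failed job followed by its raw status object, which is where an admission webhook or image pull error would appear.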

Assigning an Integration

- Web

- Helm

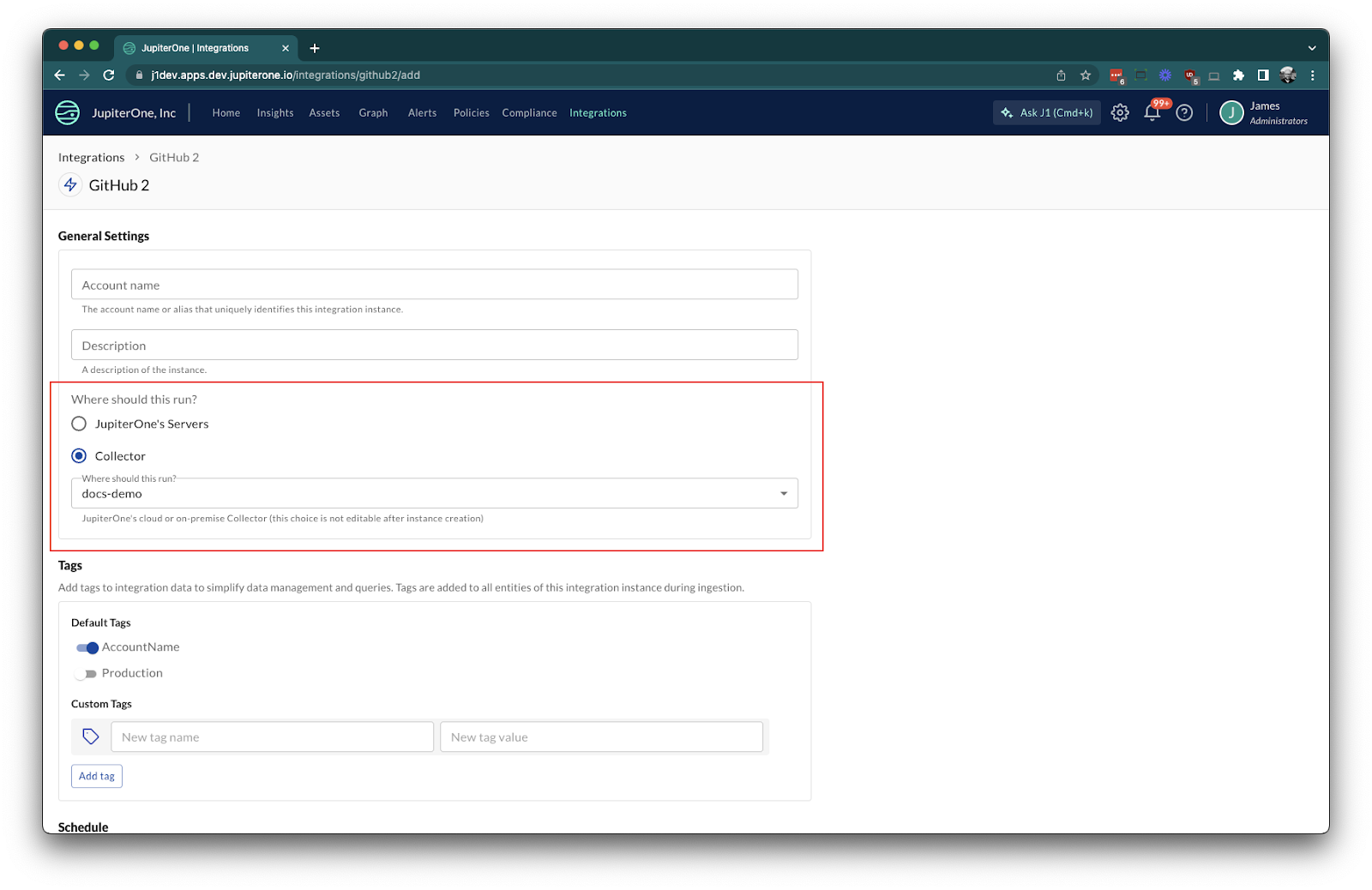

Assigning an integration job to a collector first requires that there are collectors registered and available. Once collectors are available, the process for defining an integration job and assigning it to a collector is straightforward.

For integrations that are collector compatible, complete the integration configuration as normal. During configuration, you'll notice there's an additional option to choose where the integration should run.

Select Collector on the integration instance, and choose the corresponding collector for which you'd like the integration to run.

You may also choose to setup an integration by using a helm chart. This is supported through the Custom Resource Definition integrationinstance which is installed as part of the Kubernetes Operator.

Integrations that support this method will have documentation on how to set this up. See the Kubernetes Managed integration for an example.

Updating the Operator

To update to the latest version:

helm repo update

helm upgrade integration-operator jupiterone/jupiterone-integration-operator --namespace jupiterone

Uninstalling

To remove the runner and the operator, and then delete the namespace:

helm uninstall <runnerName> --namespace jupiterone

helm uninstall integration-operator --namespace jupiterone

kubectl delete namespace jupiterone

Multiple Clusters

Multiple Kubernetes clusters are supported by installing the JupiterOne Integration Operator and Integration Runner Helm charts on each cluster. Each cluster is managed independently by the operator running within that cluster.

To set up multiple clusters:

- Repeat the installation steps for each cluster where you want to run collectors.

- Each cluster will have its own Integration Runner and Integration(s) managed separately.

You may use automation tools such as ArgoCD, Flux, or other GitOps solutions to automate and manage Helm chart deployments across your clusters. This allows you to keep your collector deployments consistent and up to date in all environments.

Each collector is registered independently with JupiterOne, and integration jobs can be assigned to collectors in any cluster as needed.
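Without a GitOps tool, the same installation can be looped over kubeconfig contexts. The sketch below is one possible approach under several assumptions: helm upgrade --install with --create-namespace (Helm 3.2+) stands in for the separate steps above, the runner is named runner-<context>, and each cluster has a values file named runner-values-<context>.yaml. All of those names are hypothetical.

```shell
# Install or upgrade both charts on every kubeconfig context given as an argument.
# Stops at the first failure so a broken cluster is not silently skipped.
install_on_clusters() {
  for ctx in "$@"; do
    echo "== $ctx"
    helm upgrade --install integration-operator \
      jupiterone/jupiterone-integration-operator \
      --kube-context "$ctx" --namespace jupiterone --create-namespace || return 1
    helm upgrade --install "runner-$ctx" \
      jupiterone/jupiterone-integration-runner \
      --kube-context "$ctx" --namespace jupiterone \
      --values "runner-values-$ctx.yaml" || return 1
  done
}
```

For example, install_on_clusters dev prod. Context names that contain characters not allowed in Helm release names (such as colons or slashes) would need to be mapped to a simpler runner name first.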

ArgoCD

You may use ArgoCD to automate Helm chart deployments in your clusters. The following are example ArgoCD Applications setting up the Integration Operator and Integration Runner.

You can find the current versions of the Integration Operator and Integration Runner charts by searching the Helm repository:

helm repo update

helm search repo jupiterone

Integration Operator

Replace <version> with the version you would like to install.

apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: jupiterone-integration-operator
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://jupiterone.github.io/helm-charts
    chart: jupiterone-integration-operator
    targetRevision: <version>
  destination:
    namespace: jupiterone
    server: https://kubernetes.default.svc
  syncPolicy:
    automated:
      prune: true
      selfHeal: true

Integration Runner

Replace <version>, <account-id>, and <environment> with your own values. This example sets createSecret to false and references an existing Secret named j1token.

apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: jupiterone-integration-runner
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://jupiterone.github.io/helm-charts
    chart: jupiterone-integration-runner
    targetRevision: <version>
    helm:
      values: |
        secretAPITokenName: j1token
        createSecret: false
        accountID: <account-id>
        jupiterOneEnvironment: <environment>
  destination:
    namespace: jupiterone
    server: https://kubernetes.default.svc
  syncPolicy:
    automated:
      prune: true
      selfHeal: true

Integration Instance

You can also set up certain integrations with Helm charts, which ArgoCD can then manage. The following example sets up the Kubernetes Managed integration. Replace <version> with the latest chart version.

apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: kubernetes-managed
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://jupiterone.github.io/helm-charts
    chart: kubernetes-managed
    targetRevision: <version>
    helm:
      values: |
        collectorName: runner
        pollingInterval: ONE_WEEK
  destination:
    namespace: jupiterone
    server: https://kubernetes.default.svc
  syncPolicy:
    automated:
      prune: true
      selfHeal: true

Troubleshooting

If pods are not starting or integrations are not running:

- Check pod logs:

kubectl logs -n jupiterone <pod-name>

- Verify CRDs are present:

kubectl get crd | grep jupiterone

- Double-check authentication credentials.

- Ensure network connectivity to JupiterOne services.
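The first two checks can be gathered into a single report to attach to a support request. A minimal sketch with an illustrative function name; it assumes the operator pod carries the control-plane=controller-manager label used in the private registry verification command earlier on this page.

```shell
# Collect basic diagnostics for the jupiterone namespace in one pass.
collect_diagnostics() {
  echo "== pods"
  kubectl get pods -n jupiterone
  echo "== JupiterOne CRDs"
  kubectl get crd | grep jupiterone || echo "no JupiterOne CRDs found"
  echo "== operator logs (last 50 lines)"
  kubectl logs -n jupiterone -l control-plane=controller-manager --tail=50
}
```

Redirect the output to a file, for example collect_diagnostics > j1-diagnostics.txt 2>&1, and review it for secrets before sharing.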

Known Limitations

- Unable to migrate integration jobs between collectors.

- Integration job distribution across multiple pods is still being enhanced.

- Some integrations may not be compatible with collectors.